I have been looking at ways to reduce content duplication and content theft, but cannot seem to find a way to prevent websites like AbsorbTheWeb.com from copying all my content (and ranking above me). I wrote about RSS content theft a few weeks ago and have been unable to stop it. I attempted to block the IP address but the scraper software must be somewhere else.

Today I have installed the Yoast WordPress SEO Plugin as it allows you to set some code in your RSS feed. In my case I have added links to the top and bottom of each feed item.

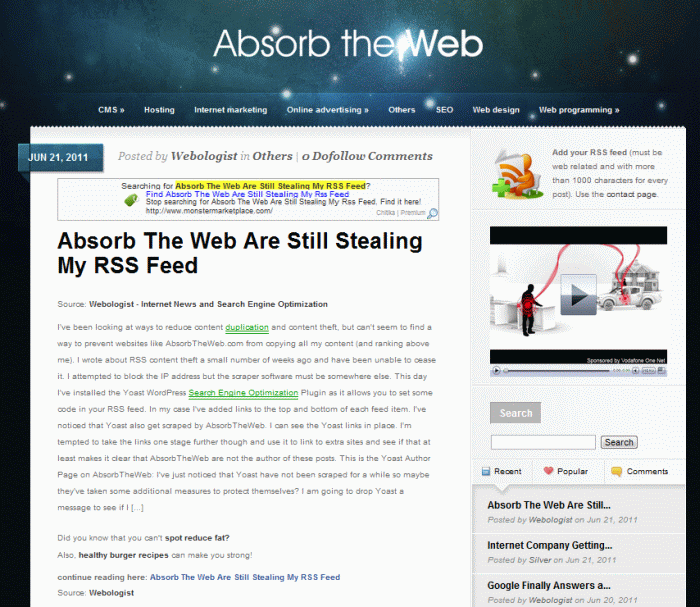
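As a rough illustration of the idea (this is not the Yoast plugin's actual code, which runs as PHP inside WordPress), here is a minimal Python sketch of wrapping a feed item with attribution links at the top and bottom. The function name, parameters, wording of the credit lines and the example.com URLs are all assumptions made up for this example.

```python
from html import escape


def add_attribution(item_html, post_title, post_url, site_name, site_url):
    """Wrap one feed item's HTML with attribution links at the top and bottom.

    Hypothetical helper: the names and the credit wording are illustrative only.
    """
    # Escape titles and URLs so they are safe to place inside HTML.
    post_link = '<a href="{}">{}</a>'.format(
        escape(post_url, quote=True), escape(post_title))
    site_link = '<a href="{}">{}</a>'.format(
        escape(site_url, quote=True), escape(site_name))

    header = "<p>{} was first published on {}.</p>".format(post_link, site_link)
    footer = "<p>Original article: {} on {}.</p>".format(post_link, site_link)

    # Credit line above the content, original content, credit line below.
    return header + "\n" + item_html + "\n" + footer


if __name__ == "__main__":
    print(add_attribution(
        "<p>Post body goes here.</p>",
        "Example post",
        "https://example.com/example-post/",
        "Example Blog",
        "https://example.com/",
    ))
```

If a scraper republishes the feed item unchanged, both credit lines travel with it, so the copy links back to the original post and site.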

I have noticed that Yoast also get scraped by AbsorbTheWeb. I can see the Yoast links in place. I am tempted to take the links one stage further though and use them to link to extra sites and see if that at least makes it clear that AbsorbTheWeb are not the author of these posts.

This is the Yoast Author Page on AbsorbTheWeb:

I have just noticed that Yoast have not been scraped for a while so maybe they have taken some additional measures to protect themselves? I will drop Yoast a message to see if I can find out.

This whole issue has been really frustrating for me. I can see that other sites have also been copied by AbsorbTheWeb but most outrank them so that they appear first for their content.

Google Tackles Content Scrapers in Panda Version 2 Point Something

I have heard that Google is hoping to tackle scrapers in the next update of the Panda layer of the search algorithm. That is good news, but according to Barry Schwartz, Panda 2.2 was rolled out last week – see Official: Google Panda Update 2.2 Is Live for news on this.

Now, the first time Panda was rolled out it came in 2 tranches. Maybe I am still getting outranked by a scraper because these sites were not part of last week’s Panda update? Let’s hope so.

Some Other Sites Scraped by AbsorbTheWeb (ATT):

Here is a little round up of other sites I see on AbsorbTheWeb. I really like a lot of these sites and it makes me feel a little chuffed that AbsorbTheWeb felt that I was good enough to be a part of the gang!

These are not in any particular order:

- www.nodalbits.com – They often rank below ATT for title searches. It is run by Chris Silver Smith who describes himself as a technologist and marketer.

- www.estherckane.com – Esther Kane’s Internet Marketing blog. Her latest blog on NEUROMARKETING ranks first, 2 spots above ATT.

- www.dhcommunications.com – This is Dianna Huff’s B2B Web marketing and consulting blog. Her post on the Panda update, The Panda Update: Unique Content Rules, People, ranks first.

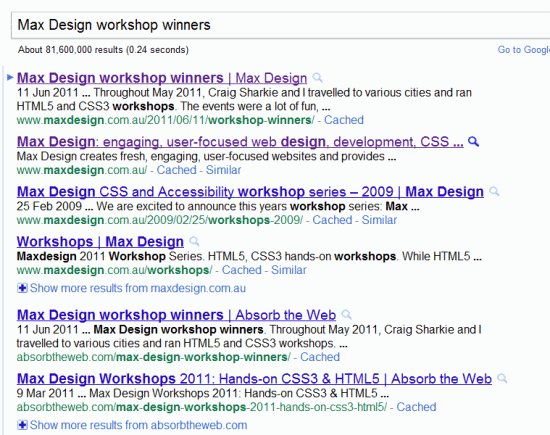

- www.maxdesign.com.au – This is the web design business run by Russ Weakley. Their content outranks ATT, e.g. Max Design workshop winners ranks first with the following 3 spots also taken, then ATT rank next in 5th and 6th place. Time for another screenshot:

Max Design versus AbsorbTheWeb in Google Search

Nice to see that Max Design are looking strong here.

I suspect that the real problem is poorer “SEO” on my part. But that really is no excuse. Not many sites really work hard at SEO. I certainly do nothing on this blog, I just write about it here, based on what I learn from managing and promoting other websites.

But that is my concern – what about all those great little sites out there that should be ranking in first place for a whole range of keyword phrases, but are not because of ruthless content scrapers? How many small businesses never get off the ground and fail to understand why? Even those that are looking stronger, such as Max Design in Australia, may be losing some new business as a result of these tactics.

Unless a website has permission it is illegal in most countries to take their content and republish it. In the UK web content is covered by UK copyright law. However, enforcing that law is not easy.

Google do provide tools to help remove the content from their search index, such as the DMCA tool and Spam Reports, but those are only useful if you first know that there is a problem and then actually have the time to do something about it. I certainly do not have time to sit here and file DMCAs all day long to try to remove one content thief. If I did, they would probably just start doing the same thing from another site.

In fact, if you look at the server where ATT is hosted, it is full of sites all doing the exact same thing. This BING search – http://www.bing.com/search?q=ip%3A74.52.14.173 – shows all the websites on the same server as ATT. For example, sportishblog.com has the same attribution link at the bottom right of its articles. On “Much still remains to be done to ensure global food security” you can see this text at the bottom right:

The post, titled “Much still remains to be done to ensure global food security”, has been written by Armenian News | Details – PanARMENIAN.Net and is pulled with RSS from the site Armenian News | Details – PanARMENIAN.Net.

The original article is here: http://www.panarmenian.net/eng/details/72827/
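The Bing search URL above is just the query `ip:74.52.14.173` with the colon percent-encoded. A small sketch, assuming you want to build the same kind of query for any other IP address (the function name is made up for this example, and whether Bing still honours the `ip:` operator is not something this code can guarantee):

```python
from urllib.parse import urlencode


def bing_ip_search_url(ip_address):
    """Build a Bing search URL that uses the ip: operator for one IP address."""
    # urlencode percent-encodes the colon, giving q=ip%3A<address>.
    return "http://www.bing.com/search?" + urlencode({"q": "ip:" + ip_address})


if __name__ == "__main__":
    print(bing_ip_search_url("74.52.14.173"))
```

Run with the IP address quoted above, this reproduces the URL used in this post.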

It seems that this is a very common method of creating content, and one that so far has escaped Google’s “Farmer” update. Really Google should have focused on these sites before putting its more aggressive quality controls in place. Much of the criticism about Google’s Panda updates has been that scraper sites rank above the original content.

This proves that SEO still works if you know what you are doing. And credit to AbsorbTheWeb, as they must know what they are doing to be able to rank well using nothing but duplicate content. According to Yahoo backlinks, they only have 533 links to the whole domain too, which is fewer than Webologist has! They do have links on Wikipedia and SEO By The Sea though which may explain some of their strength.

Right, I am now about to hit publish. Will then check if the Yoast plugin inserts my credits for me.

Update – Webologist Copied Again – Yoast Links In Place

Well, I just checked Google and I am not there, but this is. The Yoast “cheeky links” are in place though. I really do not think that they will ever carry any value. In fact, I would be even more upset with Google if they did!

Here is the Google search for this article:

Only uncovered one comment link on SEObytheSea to AbsorbTheWeb. Not sure that particular link actually passed along much value, but it’s now gone. If you’ve seen more, please let me know.

Have you considered sending an email to the site owner, asking them to stop? If they refuse to, or don’t respond, the next step would be to contact their host. If that doesn’t work, a DMCA request to Google & Bing might be a next step.

Hey Bill, just one comment? They must have some tricks up their sleeves. Maybe it is sheer volume on targeted, fresh content. They seem to have hand picked a relatively small number of sites, surprised they picked me. Maybe they only picked sites which they thought would not notice!

Thank you for talking about this topic. I positively HATE Absorb the Web and am pissed that they duplicate my content. Sometimes their duplicate content ranks above my original content.

There must be a way we can alert Google to this problem.

Also, do you know anything about the new “author” tag?

Hi Dianna. Yeah, I have blogged about the author tag. Basically, on the pages you write you add a link to your author page on the same site, with rel="author". But no idea when or if Google will start to use this to rank content.

I also provided some instructions for those that use WordPress to automatically add the author link and tag to posts.
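To make the markup concrete, here is a minimal sketch of generating such a link. This is not the WordPress code mentioned above, and the function name, author name and author page path are placeholders invented for the example.

```python
from html import escape


def author_link(author_name, author_page_url):
    """Return an HTML link to an author page on the same site, marked rel="author"."""
    # Escape both values so they are safe inside the attribute and the link text.
    return '<a href="{}" rel="author">{}</a>'.format(
        escape(author_page_url, quote=True), escape(author_name))


if __name__ == "__main__":
    print(author_link("Jane Doe", "/author/jane-doe/"))
```

The resulting anchor goes on each post and points at the author page on the same site.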

With ATT it must be that they get crawled quicker than us. Looks like Webo has not had any new pages indexed for a few days. Not on though, so many sites must suffer without ever realising why they are failing in search. Google are apparently on the case.

Hey guys.

I am the owner of absorbtheweb.com. Sorry about that …

When I made the site I was still not very experienced and educated in the art of the Internet :). I thought it is OK to copy someone if you put a link to his page and that this way google will rank them before me every time.

Of course I was wrong. I slowly started to understand more and to change my view on the subject. At some point I started to think about stopping the site but I did not want to remove all the comments that are dofollow and therefore waste the time of the people who posted there expecting links. Then I came up with an idea, which is to make the pages noindex, follow, so they do not steal traffic and are not considered duplicate, but still pass value to the dofollow comments.

Absorbtheweb.com is now banned from google though. I guess some of you guys helped for that :). But no problem. If sometimes it returns to google I will open it for guest posts I guess.

Sorry for the trouble I have caused. Live and learn.

Regards.

Hi Nick, you are right, you have been totally dropped. I am sure it did not come from here though (not exactly a lot of people read this blog!).

Since posting there have been some pretty major updates with Google, they are really battling duplicate content, stolen / syndicated content, poorly spun articles etc. etc. Lots of websites that relied only on content from feeds have vanished from the Google Index completely, and even some sites which just had content automatically spun have disappeared too – even sites with good backlinks.

Although the comments on your site may be of value Google will probably not index the pages now until they are all unique. If you have a good backlink profile then removing the old content and writing a few new articles could get you ranked again. Maybe start with “How to get your site banned from Google after the Panda Update” and then give some tips on how writing quality and unique articles boosts your PageRank.

Thanks for the tips.

Yeah I could make an entirely new site on the domain, but I am not sure if it won't be better to get a new domain, instead of using this one that has a bad reputation already.

But anyways. I am currently not wasting time on it ;). I have other sites to work on and do not have time to try to revive this one.

Have a nice day 😛

It would be an interesting experiment if nothing else, and should determine if you were dropped based on a Panda algo update or a manual review. If your site was dropped based on the algo change then you could bounce back.

I am pretty sure it is manual. Google algo sucks at this. They do not say it, but they know it :D.